Mathematics, 23.03.2020 18:29 jaydenrenee111902

Assume a media agency reports that it takes television streaming service subscribers in the U.S. an average of 6.33 days to watch the entire first season of a television series, with a standard deviation of 3.98 days. Scarlett is an analyst for an online television and movie streaming service company that targets the 18-50 age bracket. She wants to determine whether her company's customers take less time to watch the company's series offerings. Scarlett plans to conduct a one-sample z-test with a significance level of α = 0.05 to test the null hypothesis, H0: μ = 6.33 days, against the alternative hypothesis, H1: μ < 6.33 days. The variable μ is the mean amount of time, in days, that it takes for customers to watch the first season of a television series.

Scarlett selects a simple random sample of 840 customers who watched the first season of a television series from the company database of over 25,000 customers that qualified. She compiles the summary statistics shown in the table.

| Sample size (n) | Sample mean (x̄) | Standard error (SE) |
|---|---|---|
| 840 | 6.14 | 0.1373 |

Required:

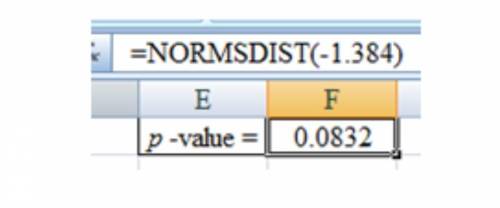

1. Compute the p-value for Scarlett's hypothesis test directly using a normalcdf function on a TI calculator or by using software.
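One way to do the software route is sketched below in Python, using only the standard library. It assumes the left-tailed test stated in the problem (H1: μ < 6.33) and takes the sample mean and standard error straight from the table. The helper `normal_cdf` and the variable names are illustrative choices, not part of the original question.

```python
import math

def normal_cdf(z):
    """Standard normal CDF, written in terms of the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

mu_0 = 6.33    # hypothesized mean under H0, in days
x_bar = 6.14   # sample mean from the table, in days
se = 0.1373    # standard error from the table (3.98 / sqrt(840))

# Test statistic for a one-sample z-test
z = (x_bar - mu_0) / se

# Left-tailed test, so the p-value is the area to the left of z
p_value = normal_cdf(z)

print(f"z = {z:.4f}, p-value = {p_value:.4f}")
```

The result is a z-statistic of about -1.38 and a p-value of about 0.083. On a TI calculator, the same area should come from `normalcdf(-1E99, 6.14, 6.33, 0.1373)`, where the arguments are the lower bound, upper bound, mean, and standard deviation of the sampling distribution. Since 0.083 is greater than α = 0.05, the sample would not give Scarlett enough evidence to reject H0.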

